
Innovating Insurance with Microservices – Part 3

In Part 2 of this blog series, we shared how a microservices architecture applies to the insurance industry and how it can play a big role in insurance transformation. This is especially true because the insurance industry is transitioning to a platform economy, with heavy emphasis on the interoperability of capabilities across a diverse ecosystem of partners. In this segment, we will share our views on best practices for adopting a microservices architecture in order to build new applications and transform existing ones.

Now that we have made the case for the ability of a microservices architecture to bring speed, scale and agility to IT operations, we should consider how best to approach microservices. How can we transform existing monoliths into a microservices architecture? Although the approach to designing microservices may vary by organization, there are best practices and guidelines that can help teams make these decisions.

How many microservices are too many?

Going “too micro” is one of the biggest risks for organizations that are still new to microservices architectures. If a “thesis-driven” approach is adopted, there will be a tendency to build many smaller services. “Why not?” you may ask, “After all, once we buy into the approach, shouldn’t we just go ‘all in’?”

We encourage insurers to be careful and test the waters. We caution against starting out with too many small services, because of the added complexity of mixed architectures, the steep curve of upfront design, the significant changes required in development processes, and a possible lack of DevOps preparedness. Instead, we suggest a “use-case-driven” approach. Focus on urgent problems, where the business needs rapid changes to overcome issues that the current system inhibits, and break the relevant monolith module into microservices that serve current needs, rather than into finer-grained microservices based on assumptions about future needs. Once the monolith has been broken into microservices, those services can be made more granular later as needed; this avoids incurring the complexity of too many microservices without any assurance of future benefit.

What are the constraints (LOC, language, etc.) for designing better microservices?

There are many myths about the number of lines of code, programming languages and permissible frameworks (to name a few) for designing better microservices. One argument holds that if we do not set a fixed limit on lines of code per microservice, the service will eventually grow into a monolith. Although this is a valid concern, an arbitrary size limit on lines of code will create too many services and introduce cost and complexity. If microservices are good, would “nanoservices” be even better? Of course not. We must ensure that the cost of building and managing a microservice is less than the benefit it provides. Hence, the size of a microservice should be determined by its business benefit rather than by its lines of code.

Another advantage of a microservices architecture is interoperability between microservices, regardless of their underlying programming languages and data structures. No single framework, programming language or database is inherently better suited than the others for building microservices. The choice of technology should be based on the business benefit a particular technology provides for the purpose the microservice serves. Embracing this kind of technology flexibility will give insurers vital agility moving forward.

How does it impact development processes?

A microservices architecture promotes small, incremental changes that can be deployed to production with confidence. Small changes can be deployed quickly and tested. Using a microservices architecture naturally leads to DevOps. The goal is to have better deployment quality, faster release frequency, and improved process visibility. The increased frequency and pace of releases means you can innovate and improve the product faster.

Putting a DevOps pipeline with Continuous Integration and Continuous Deployment (CI/CD) into practice requires a great deal of automation. Developers need to treat infrastructure and policies as code (Infrastructure as Code and Policy as Code), shifting operational concerns about managing infrastructure and compliance from production into development.
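To make “Policy as Code” concrete, here is a minimal sketch, assuming a hypothetical `DeploymentManifest` structure and four illustrative compliance rules (pinned image tag, encryption at rest, at least two replicas, an `owner` label). None of these names come from a specific tool; the point is that compliance checks become ordinary code that a CI/CD pipeline can run on every change, long before anything reaches production.

```python
from dataclasses import dataclass, field


# Hypothetical deployment manifest for one microservice; the field names are
# illustrative and not tied to any specific tool's schema.
@dataclass
class DeploymentManifest:
    service: str
    image: str
    replicas: int
    encrypt_at_rest: bool
    labels: dict = field(default_factory=dict)


def check_policies(m: DeploymentManifest) -> list[str]:
    """Return every policy violation; an empty list means the manifest is compliant."""
    violations = []
    # Rule 1: images must be pinned to an explicit tag (not missing, not "latest").
    # Only the last path segment is inspected, so a registry port such as
    # "registry:5000/app" is not mistaken for a tag.
    last_segment = m.image.rsplit("/", 1)[-1]
    if ":" not in last_segment or last_segment.endswith(":latest"):
        violations.append(f"{m.service}: image must be pinned to a version tag")
    # Rule 2: data at rest must be encrypted.
    if not m.encrypt_at_rest:
        violations.append(f"{m.service}: encryption at rest is required")
    # Rule 3: run at least two replicas for availability.
    if m.replicas < 2:
        violations.append(f"{m.service}: at least 2 replicas required")
    # Rule 4: every service must name an owning team.
    if not m.labels.get("owner"):
        violations.append(f"{m.service}: an 'owner' label is required")
    return violations


# Example: a pipeline step would fail the build if this list is non-empty.
manifest = DeploymentManifest(
    service="claims-intake",
    image="registry.example.com/claims-intake:1.4.2",
    replicas=1,
    encrypt_at_rest=True,
    labels={"owner": "claims-team"},
)
print(check_policies(manifest))  # ['claims-intake: at least 2 replicas required']
```

In practice, teams often use a dedicated policy engine for this; the plain-Python version above only illustrates the idea of versioned, testable compliance rules.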

It is also very important to implement real-time, continuous monitoring, alerting and assessment of both the infrastructure and the application. This helps keep the rapid pace of deployment reliable and supports consistent, positive customer experiences.
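As one small illustration of continuous alerting, the sketch below assumes a hypothetical `ErrorRateAlert` that watches the outcome of the most recent requests to a service and fires when the error rate in that sliding window exceeds a threshold. The window size and threshold are placeholder values, not recommendations; real deployments would typically feed such rules from a monitoring system rather than hand-rolled code.

```python
from collections import deque


class ErrorRateAlert:
    """Fire when the error rate over the last `window` requests exceeds `threshold`."""

    def __init__(self, window: int = 100, threshold: float = 0.05):
        self.window = window
        self.threshold = threshold
        # Oldest outcomes drop off automatically once the window is full.
        self.outcomes: deque = deque(maxlen=window)  # True means the request failed

    def record(self, failed: bool) -> bool:
        """Record one request outcome and return True if the alert should fire."""
        self.outcomes.append(failed)
        if len(self.outcomes) < self.window:
            return False  # not enough data yet to judge the error rate
        error_rate = sum(self.outcomes) / len(self.outcomes)
        return error_rate > self.threshold
```

A sliding window keeps the alert responsive to recent deployments while ignoring isolated failures that happened long ago.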

To validate that we are on the right path, it is important to capture some metrics on the project. Some of the KPIs we like to look at are:

- MTTR – The mean time to respond as measured from the time a defect was discovered until the correction was deployed in production.

- Number of Deploys to Production – These are small, incremental changes being introduced into production through continuous deployment.

- Deployment Success Rate – Only 0.001% of AWS deployments cause outages! When done properly, we should see a very high deployment success ratio.

- Time to first commit – This is the time it takes for a new person joining the team to release code to production. A shorter period of time indicates well-designed microservices that do not carry the steep learning curve of a monolith.
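The KPIs above can be computed from simple delivery records. The sketch below assumes hypothetical `Defect` and `Deployment` records (in practice this data would come from an issue tracker and a CI/CD system) and shows one straightforward way to derive each metric as defined in the list.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Defect:
    discovered_at: datetime
    fix_deployed_at: datetime  # when the correction reached production


@dataclass
class Deployment:
    finished_at: datetime
    succeeded: bool  # False if the deployment failed or caused an outage


def mttr(defects: list[Defect]) -> timedelta:
    """Mean time from defect discovery until the fix is deployed in production."""
    if not defects:
        raise ValueError("no defects recorded")
    total = sum((d.fix_deployed_at - d.discovered_at for d in defects), timedelta())
    return total / len(defects)


def deploys_since(deploys: list[Deployment], since: datetime) -> int:
    """Number of deploys to production in the reporting period starting at `since`."""
    return sum(1 for d in deploys if d.finished_at >= since)


def deployment_success_rate(deploys: list[Deployment]) -> float:
    """Fraction of deployments that succeeded."""
    if not deploys:
        raise ValueError("no deployments recorded")
    return sum(d.succeeded for d in deploys) / len(deploys)


def time_to_first_commit(joined_at: datetime, first_prod_release_at: datetime) -> timedelta:
    """Time from a new team member joining until their first change reaches production."""
    return first_prod_release_at - joined_at
```

Tracking these numbers per service, rather than only for the whole system, makes it easier to see which microservices are healthy and which need attention.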

Principles for Identifying Microservices and Examples

More important than the size of a microservice is its internal cohesion and its independence from other services. To assess these, we need to inspect the data and processing associated with each service.

A microservice must own its domain data and logic, which aligns naturally with Domain-Driven Design. However, a complex domain model is often better represented as multiple smaller, interconnected models. For example, consider an insurance model composed of multiple smaller models, where the Party model can be used as ClaimParty and also as Insured (and various others…). In such a multi-model scenario, it is important to first establish a context for each model, called a Bounded Context, which governs the logic associated with that model. Defining a microservice for each Bounded Context is a good start, since the two are closely related.
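The Party example can be sketched in code. This is a minimal illustration, assuming hypothetical fields and a hypothetical `ClaimRole` enum: each Bounded Context keeps its own model of a party containing only what that context needs, and the contexts share nothing but the party's identifier. Small translation functions map the core `Party` into each context's model.

```python
from dataclasses import dataclass
from enum import Enum


# Party management context: owns the core party data (fields are illustrative).
@dataclass(frozen=True)
class Party:
    party_id: str
    name: str
    date_of_birth: str
    address: str


# Policy administration context: only what policy servicing needs.
@dataclass(frozen=True)
class Insured:
    party_id: str
    name: str
    policy_number: str


# Claims context: a party's role in a specific claim.
class ClaimRole(Enum):
    CLAIMANT = "claimant"
    WITNESS = "witness"
    THIRD_PARTY = "third_party"


@dataclass(frozen=True)
class ClaimParty:
    party_id: str
    name: str
    claim_number: str
    role: ClaimRole


def to_insured(party: Party, policy_number: str) -> Insured:
    """Translate the core Party into the policy context's model."""
    return Insured(party.party_id, party.name, policy_number)


def to_claim_party(party: Party, claim_number: str, role: ClaimRole) -> ClaimParty:
    """Translate the core Party into the claims context's model."""
    return ClaimParty(party.party_id, party.name, claim_number, role)
```

Because each context owns its own model, the claims service can evolve `ClaimParty` without forcing changes on the policy service, and vice versa.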

Along with Bounded Contexts, aggregates within the domain model that are loosely coupled and driven by business requirements are also good candidates for microservices, as long as they exhibit the main design tenets of microservices; for example, services for managing Vehicles as an aggregate of a Policy object.
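Here is a minimal sketch of the Policy/Vehicles example, assuming hypothetical business rules (no duplicate VINs, and an illustrative `max_vehicles` limit). `Policy` acts as the aggregate root: vehicles can only be added or removed through it, so the aggregate's rules are enforced in one place, which is what makes it a reasonable unit for a service to own.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Vehicle:
    vin: str
    make: str
    model: str
    year: int


class PolicyError(Exception):
    """Raised when a change would break one of the aggregate's rules."""


@dataclass
class Policy:
    """Aggregate root: vehicles are only changed through the policy."""

    policy_number: str
    max_vehicles: int = 4  # hypothetical business rule
    _vehicles: dict = field(default_factory=dict, init=False, repr=False)

    def add_vehicle(self, vehicle: Vehicle) -> None:
        if vehicle.vin in self._vehicles:
            raise PolicyError(f"vehicle {vehicle.vin} is already on the policy")
        if len(self._vehicles) >= self.max_vehicles:
            raise PolicyError("vehicle limit reached for this policy")
        self._vehicles[vehicle.vin] = vehicle

    def remove_vehicle(self, vin: str) -> None:
        if vin not in self._vehicles:
            raise PolicyError(f"vehicle {vin} is not on the policy")
        del self._vehicles[vin]

    @property
    def vehicles(self) -> tuple[Vehicle, ...]:
        # Return an immutable view so callers cannot bypass the rules above.
        return tuple(self._vehicles.values())
```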

While most microservices can be identified through domain model analysis, there are a number of cases where the business processing is stateless and does not modify the data model, for example, identifying the risk locations within the projected path of a hurricane. Such stateless business processes, which follow the single responsibility principle, are great candidates for microservices.
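The hurricane example can be expressed as a pure function. The sketch below assumes hypothetical inputs: insured locations as latitude/longitude points and the projected path as a list of forecast points. For simplicity it flags a location if it lies within `radius_km` of any forecast point (great-circle distance via the haversine formula); a production service would likely measure distance to the path segments or use forecast cones instead. The function reads and writes no state, so any number of instances can run side by side.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


@dataclass(frozen=True)
class RiskLocation:
    location_id: str
    lat: float
    lon: float


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points, in kilometres."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def locations_in_path(
    locations: list[RiskLocation],
    path: list[tuple[float, float]],
    radius_km: float,
) -> list[str]:
    """Stateless: the same inputs always yield the same list of at-risk location IDs."""
    return [
        loc.location_id
        for loc in locations
        if any(haversine_km(loc.lat, loc.lon, lat, lon) <= radius_km for lat, lon in path)
    ]
```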

If these principles are applied correctly, they lead to loosely coupled, independently deployable services that follow the single responsibility principle without causing chattiness across the microservices. Such services can be versioned so that clients can upgrade at their own pace, can provide fallback defaults, and can be developed by small teams.
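Versioning and fallback defaults can be illustrated without any particular web framework. In this sketch, assuming a hypothetical quote endpoint and a hypothetical discount dependency, two API versions coexist behind a simple dispatcher so existing clients keep working while new clients adopt v2, and a client wraps a remote call so that it degrades to a safe default when the dependency is unavailable.

```python
from typing import Callable


# Server side: two versions of a hypothetical quote endpoint coexist.
def quote_v1(premium: float) -> dict:
    return {"premium": premium}


def quote_v2(premium: float) -> dict:
    # v2 adds a field; v1 callers are unaffected because they keep calling v1.
    return {"premium": premium, "currency": "USD"}


HANDLERS: dict[str, Callable[[float], dict]] = {"v1": quote_v1, "v2": quote_v2}


def handle_quote(version: str, premium: float) -> dict:
    handler = HANDLERS.get(version)
    if handler is None:
        raise ValueError(f"unsupported API version: {version}")
    return handler(premium)


# Client side: fall back to a safe default if a dependency is unavailable.
DEFAULT_DISCOUNT = 0.0  # hypothetical default: no discount


def discount_with_fallback(fetch_discount: Callable[[], float]) -> float:
    try:
        return fetch_discount()
    except (ConnectionError, TimeoutError):
        return DEFAULT_DISCOUNT
```

The fallback keeps one failing service from cascading into failures elsewhere, at the cost of a temporarily less precise answer.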

Co-Existing with Legacy Architecture

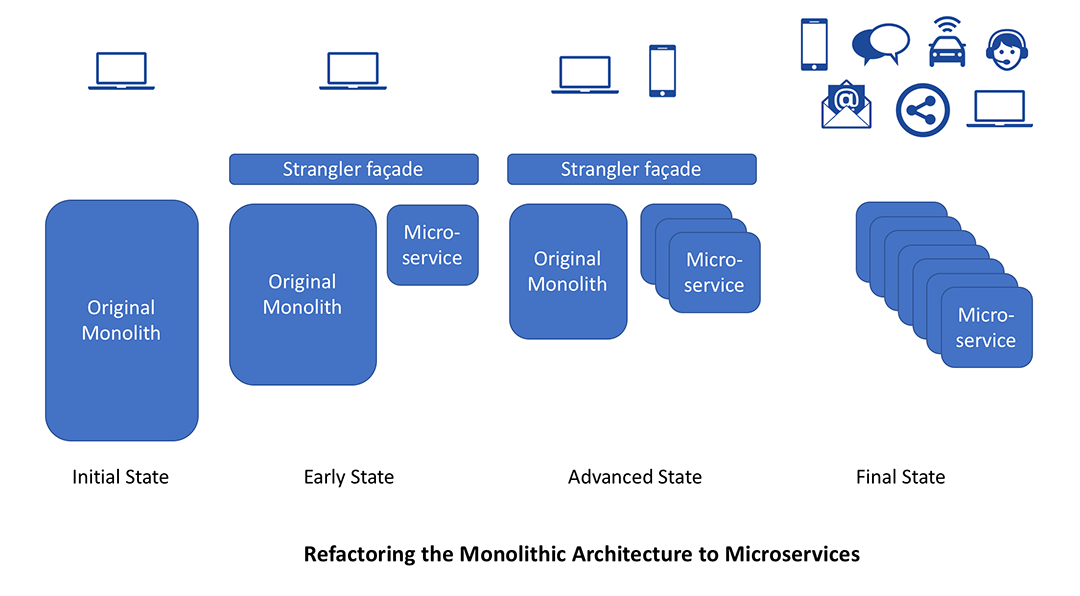

Microservices provide an excellent tool for refactoring legacy architecture by applying the Strangler Pattern. This gives legacy applications new life by gradually moving business functions into microservices, starting with those that will benefit the most. Applying this pattern requires a façade that can intercept calls to the legacy application. A modern digital frontend, which offers a better UX and connects to a variety of backends by leveraging Enterprise Integration Patterns (EIP), can serve as the strangler façade in front of existing legacy applications.

Over time, more and more functions are served directly by microservices, eliminating calls to the legacy application. This approach is best suited to large legacy applications. For smaller, less complex systems, the insurer may be better off rewriting the application.
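The routing role of a strangler façade can be sketched in a few lines. This assumes hypothetical backends modelled as plain functions and path prefixes such as `/claims`; a real façade would sit in an API gateway or digital frontend. Requests for migrated functions go to the new microservice, and everything else still reaches the legacy application, so functions can move over one at a time.

```python
from typing import Callable

Handler = Callable[[str], str]  # takes a request path, returns a response


class StranglerFacade:
    """Route each request either to a new microservice or to the legacy application."""

    def __init__(self, legacy: Handler):
        self.legacy = legacy
        self.routes: dict[str, Handler] = {}

    def migrate(self, prefix: str, service: Handler) -> None:
        """Start sending requests under `prefix` to a new microservice."""
        self.routes[prefix] = service

    def handle(self, path: str) -> str:
        # Longest matching prefix wins, so more specific migrations take precedence.
        for prefix in sorted(self.routes, key=len, reverse=True):
            if path == prefix or path.startswith(prefix + "/"):
                return self.routes[prefix](path)
        return self.legacy(path)  # not migrated yet
```

For example, after `facade.migrate("/claims", claims_service)`, claim requests bypass the legacy system while policy requests are untouched; once every prefix has been migrated, the legacy application can be retired.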

How to make organizational changes to adopt microservices-driven development

Adopting microservices-driven development requires a change in organizational culture and mindset. DevOps practice aims to shift siloed operational responsibilities to the development organization. With the successful introduction of microservices best practices, it is not uncommon for developers to handle both development and operations. Even where separate teams remain, they must communicate frequently to increase efficiency and improve the quality of the services they provide to customers. The quality assurance, performance testing and security teams also need to be tightly integrated with the DevOps teams by automating their tasks in the Continuous Delivery process.

Organizations need to cultivate a culture of shared responsibility, ownership and complete accountability in microservices teams. These teams need a complete view of the microservice from a functional, security, and deployment infrastructure perspective, regardless of their stated roles. They take full ownership of their services, often beyond the scope of their roles or titles, by thinking about the end customer’s needs and how they can help meet them. Embedding operational skills within the delivery teams is important to reduce potential friction between the development and operations teams.

It is important to facilitate increased communication and collaboration across all teams, for example through instant messaging apps, issue management systems, and wikis. These tools also keep other teams, such as sales and marketing, informed, allowing the whole enterprise to align effectively around project goals.

As we have seen in these three blogs, the microservices architecture is an excellent solution for legacy transformation. It solves a number of problems and paves the way to a scalable, resilient system that can continue to evolve over time without becoming obsolete. It enables rapid innovation while delivering positive customer experiences. A successful implementation of the microservices architecture does, however, require:

- A shift in organization culture, moving infrastructure operations to development teams while increasing compliance and security

- Creation of a shared view of the system and promoting collaboration

- Automation, to facilitate continuous integration and deployment

- Continuous monitoring, alerting and assessment

- A platform that can allow you to gradually transition your existing monolith to microservices and also natively support Domain-Driven Design

In our fourth and final blog in this series, we will illustrate how the Digital1st platform simplifies the development of microservices apps while fostering rapid innovation. We’ll discuss how it allows insurers to connect to various API pipelines, from Insurtechs to data sources or even an insurer’s own legacy apps.

Please share your views on this exciting topic in the comments section. We would enjoy hearing your perspective!